The tasks should also not store any authentication parameters such as passwords or token inside them. If possible, use ``XCom`` to communicate small messages between tasks and a good way of passing larger data between tasks is to use a remote storage such as S3/HDFS.įor example, if we have a task that stores processed data in S3 that task can push the S3 path for the output data in ``Xcom``,Īnd the downstream tasks can pull the path from XCom and use it to read the data. Storing a file on disk can make retries harder e.g., your task requires a config file that is deleted by another task in DAG. Therefore, you should not store any file or config in the local filesystem as the next task is likely to run on a different server without access to it - for example, a task that downloads the data file that the next task processes. If that is not desired, please create a new DAG.Īirflow executes tasks of a DAG on different servers in case you are using :doc:`Kubernetes executor ` or :doc:`Celery executor `. It difficult to check the logs of that Task from the Webserver. You would not be able to see the Task in Graph View, Grid View, etc making Used by all operators that use this connection type.īe careful when deleting a task from a DAG. Tasks, so you can declare a connection only once in ``default_args`` (for example ``gcp_conn_id``) and it is automatically Also, most connection types have unique parameter names in The ``default_args`` help to avoid mistakes such as typographical errors. You should define repetitive parameters such as ``connection_id`` or S3 paths in ``default_args`` rather than declaring them for each task. It, for example, to generate a temporary log. Thisįunction should never be used inside a task, especially to do the criticalĬomputation, as it leads to different outcomes on each run. * The Python datetime ``now()`` function gives the current datetime object. You shouldįollow this partitioning method while writing data in S3/HDFS as well. 
You can use ``data_interval_start`` as a partition.

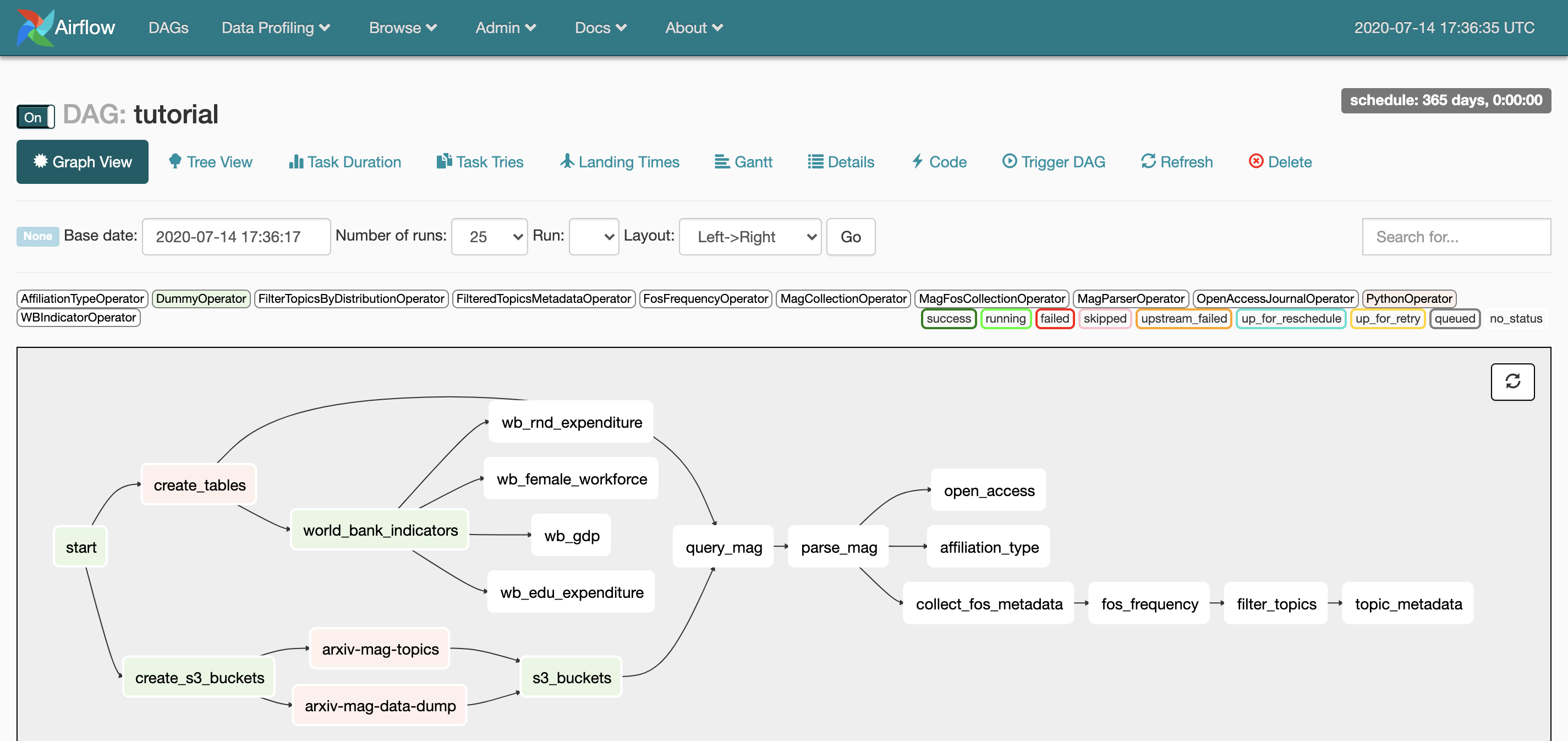

A better way is to read the input data from a specific Someone may update the input data between re-runs, which results inĭifferent outputs. * Read and write in a specific partition. * Do not use INSERT during a task re-run, an INSERT statement might lead toĭuplicate rows in your database. Some of the ways you can avoid producing a different AnĮxample is not to produce incomplete data in ``HDFS`` or ``S3`` at the end of aĪirflow can retry a task if it fails. Implies that you should never produce incomplete results from your tasks. You should treat tasks in Airflow equivalent to transactions in a database. Please follow our guide on :ref:`custom Operators `. To ensure the DAG run or failure does not produce unexpected results. However, there are many things that you need to take care of This tutorial will introduce you to the best practices for these three steps.Ĭreating a new DAG in Airflow is quite simple. configuring environment dependencies to run your DAG testing if the code meets our expectations, writing Python code to create a DAG object, Specific language governing permissions and limitationsĬreating a new DAG is a three-step process: "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY Software distributed under the License is distributed on an Unless required by applicable law or agreed to in writing, "License") you may not use this file except in compliance To you under the Apache License, Version 2.0 (the See the NOTICE fileĭistributed with this work for additional information Licensed to the Apache Software Foundation (ASF) under one
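The re-run advice above (an UPSERT instead of a plain INSERT, and a fixed partition instead of ``now()``) can be sketched with Python's standard ``sqlite3`` module. This is a minimal, hypothetical example rather than Airflow code: the ``daily_sales`` table, the ``load_partition`` and ``partition_key`` helpers, and passing the run's ``data_interval_start`` in as a plain ``datetime`` are all assumptions for illustration. It relies on SQLite's ``INSERT ... ON CONFLICT ... DO UPDATE`` upsert syntax (SQLite 3.24 or newer).

```python
import sqlite3
from datetime import datetime, timezone


def partition_key(data_interval_start: datetime) -> str:
    """Derive the partition from the run's data interval, never from now()."""
    return data_interval_start.strftime("%Y-%m-%d")


def load_partition(conn: sqlite3.Connection, data_interval_start: datetime,
                   rows: list) -> None:
    """Hypothetical task body: write (store, amount) rows into one date partition."""
    ds = partition_key(data_interval_start)
    with conn:  # commits if the block succeeds, rolls back if it raises
        conn.executemany(
            """
            INSERT INTO daily_sales (ds, store, amount)
            VALUES (?, ?, ?)
            ON CONFLICT (ds, store) DO UPDATE SET amount = excluded.amount
            """,
            [(ds, store, amount) for store, amount in rows],
        )


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE daily_sales (ds TEXT, store TEXT, amount INTEGER,"
    " PRIMARY KEY (ds, store))"
)

start = datetime(2024, 1, 1, tzinfo=timezone.utc)
rows = [("berlin", 120), ("paris", 95)]

# Simulate a first run followed by a retry of the same task instance.
load_partition(conn, start, rows)
load_partition(conn, start, rows)

print(conn.execute("SELECT COUNT(*) FROM daily_sales").fetchone()[0])  # 2
```

With a plain ``INSERT`` (and no primary key) the retry would leave four rows instead of two. Because the partition comes from ``data_interval_start`` rather than ``datetime.now()``, a retry run days later still targets ``2024-01-01``.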

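The XCom pattern described above, where an upstream task pushes only the storage path and downstream tasks read the data themselves, can be sketched without Airflow. This is a minimal illustration under loud assumptions: a plain ``dict`` stands in for XCom, and a local temporary directory stands in for S3 only so the sketch runs on one machine. In a real deployment that location must be remote storage every worker can reach. The ``process`` and ``report`` functions and the key name are hypothetical.

```python
import json
import tempfile
from pathlib import Path

# Stand-ins (assumptions for this sketch only): the temp directory plays the
# role of remote storage such as S3, and the dict plays the role of XCom.
REMOTE_STORAGE = Path(tempfile.mkdtemp())
xcom = {}


def process(ds: str) -> None:
    """Upstream task: write the large result to storage, push only its path."""
    output_path = REMOTE_STORAGE / f"processed/ds={ds}/result.json"
    output_path.parent.mkdir(parents=True, exist_ok=True)
    output_path.write_text(json.dumps({"rows": list(range(1000))}))
    xcom["process.output_path"] = str(output_path)  # a small message, not the data


def report() -> int:
    """Downstream task: pull the path, then read the data from storage."""
    path = Path(xcom["process.output_path"])
    data = json.loads(path.read_text())
    return len(data["rows"])


process("2024-01-01")
print(report())  # 1000
```

Only a short string travels between the two tasks, and the output path is keyed by the partition, so re-running ``process`` for the same date overwrites the same object instead of creating a new one.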